April 2026

Comparing Opus 4.7 and GPT-5.5

on Excel

Two generation jumps, two different stories. GPT-5.5 is a clear step up from 5.4 — more accurate and faster at every level, and now the leading model for hard spreadsheet work. Opus 4.7 is a closer call against 4.6: noticeably better on long, complex tasks when pushed to think hard, but a hair worse on easy tasks and subtly different in feel. On everyday work, every top model lands within 1.8% of the others. Here's the data.

Nico Christie

April 23, 2026

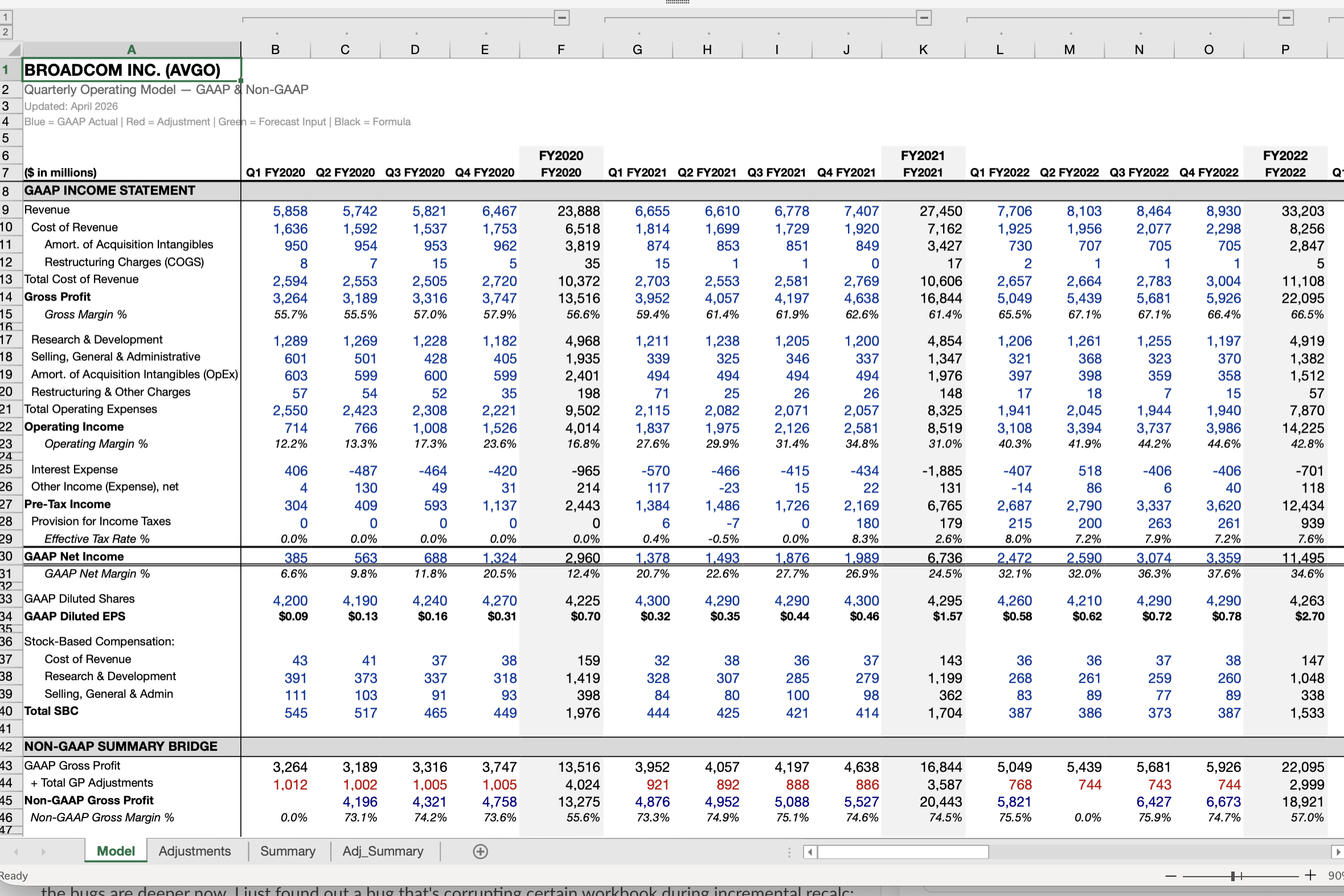

Opus 4.7 and GPT-5.5 are both live in Shortcut. We ran them against GPT-5.4 on two internal benchmarks. v23 is 40+ classic spreadsheet jobs that would take a person 1–4 hours. v25 is 40+ of our hardest — work that would take a person days. Each model ran at three reasoning effort levels (Medium, High, Max) and was scored against a human-graded rubric. Times reported below are total end-to-end — the model's thinking plus every spreadsheet action it takes to finish the job.

A note on speed. Shortcut offers Opus 4.6 in both standard mode and a fast mode that Pro and Teams users can turn on. Fast mode runs about 2.5× faster at the same accuracy. Opus 4.7 runs in standard mode only. The chart below shows both for 4.6 — solid line for standard, dotted line for fast mode, thin connector showing the shift.

1) Highest accuracy

GPT-5.5 · 73.9%

Top score on v25 at Max — tasks that would take a person days. Edges Opus 4.7 Max (72.4%) and GPT-5.4 Max (70.0%).

2) Best formatting

Opus 4.6 · best in class

GPT-5.5 closes most of GPT-5.4's gap, but Opus 4.6 output still looks like a deliverable ready for an MD. Why 4.6 remains the Shortcut default (for now).

3) Fastest*

GPT-5.5 · 12.1 min

Median time on v25 at Max — about half of Opus 4.7 Max (23.3 min). Opus 4.6 fast mode brings 4.6 to ~8.5 min at Max, right there with GPT-5.5.

*Opus 4.6 fast mode isn't available to every Shortcut user, but is meaningfully faster when enabled. Opus 4.7 has no fast mode.

1) Accuracy and speed, together

Speed and accuracy are most useful read together. In standard mode, GPT-5.5 sits at the top-left of the curve on v25 — higher accuracy at every effort level, and faster at Medium and Max. Opus 4.7 trades time for accuracy; at Max it thinks longer to get there. The generational lift on the GPT side is striking: GPT-5.4 at Max scores about what GPT-5.5 scores at Medium.

The dotted line changes the picture. With Opus 4.6 fast mode enabled, the same accuracy arrives in about 40% of the time. The curve shifts sharply left — faster than GPT-5.5 at every effort level, though GPT-5.5 still leads on accuracy. That's the experience Pro and Teams users get when they turn fast mode on. Opus 4.7 has no fast mode, so its curve stays put.

Easy vs. hard

Differences appear in harder tasks.

On everyday tasks, the top models finish within 1.8% of each other. The differences only show up on the hardest, longest work — on v25 the spread widens 4.1× to 7.4%.

v23 · everyday

1.8%

essentially tied

v25 · hardest

7.4%

4.1× wider

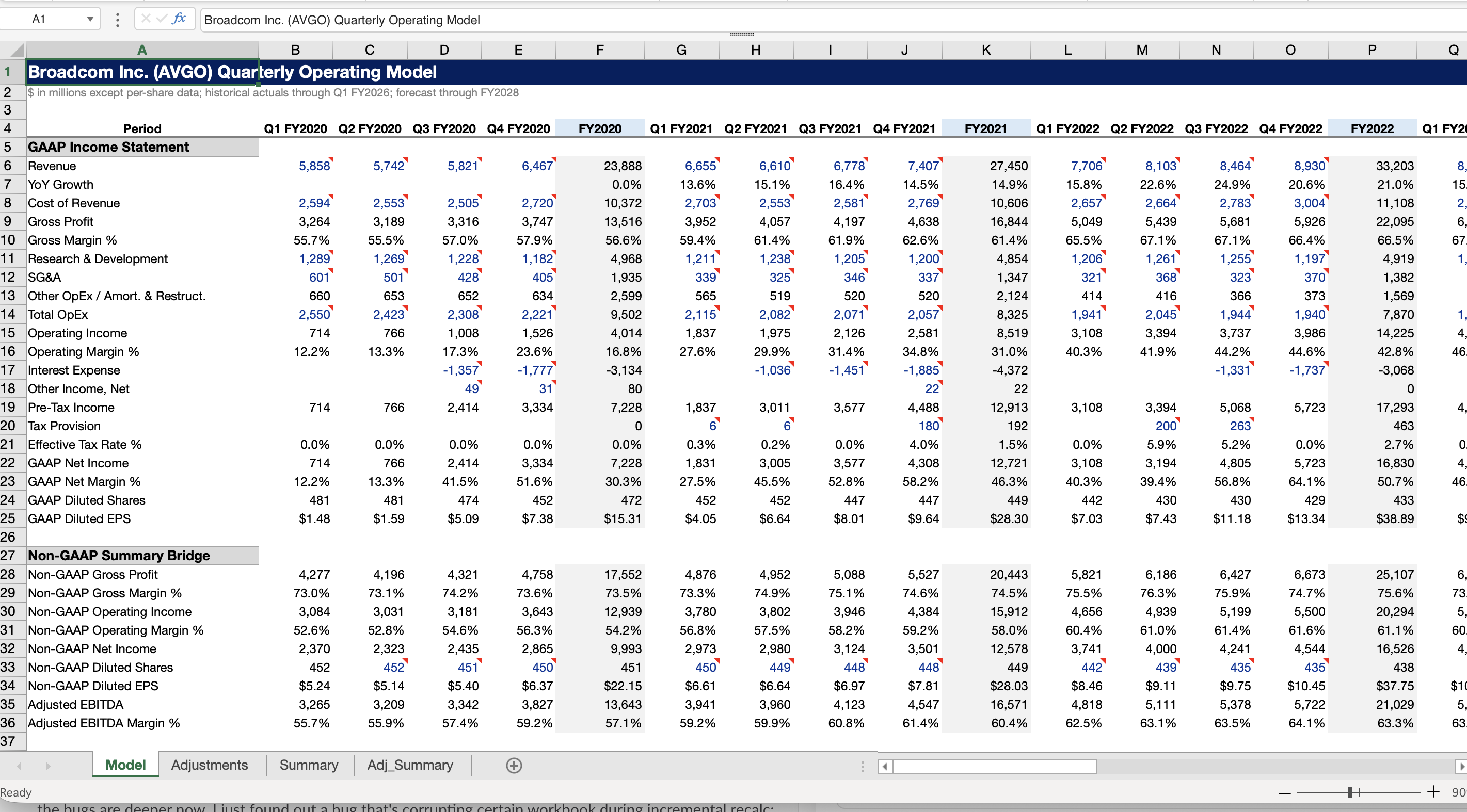

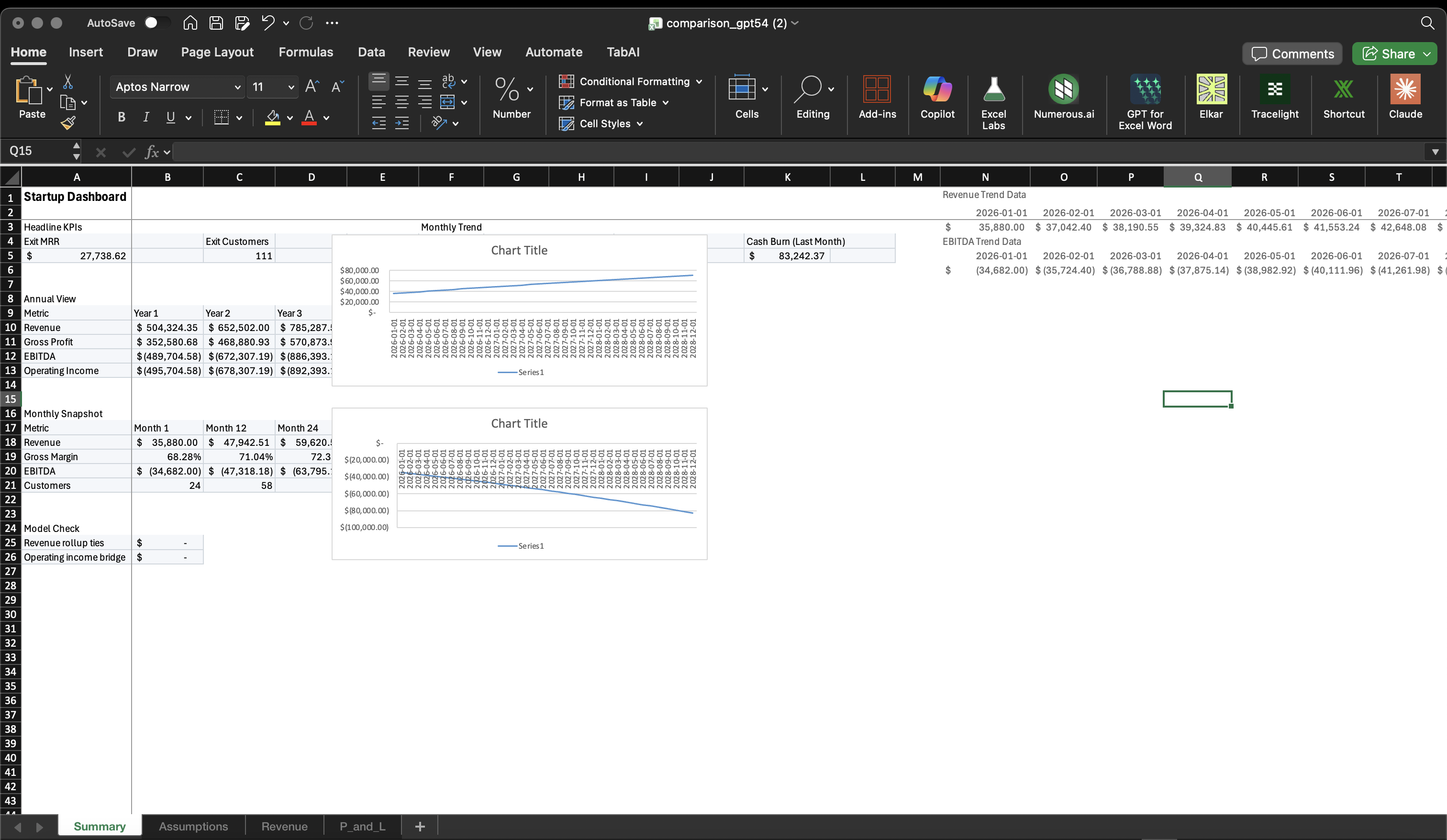

2) Formatting — GPT closes the gap, Opus keeps the crown

Formatting was GPT-5.4's weak spot: correct workbooks that looked rough. GPT-5.5 closes most of that gap — clean headers, consistent number formats, reasonable structure. It still doesn't hit Opus 4.6's bar. Opus 4.6 output looks like a deliverable ready for an MD. GPT-5.5 is solid; Opus 4.6 is best-in-class. Same prompt, same task:

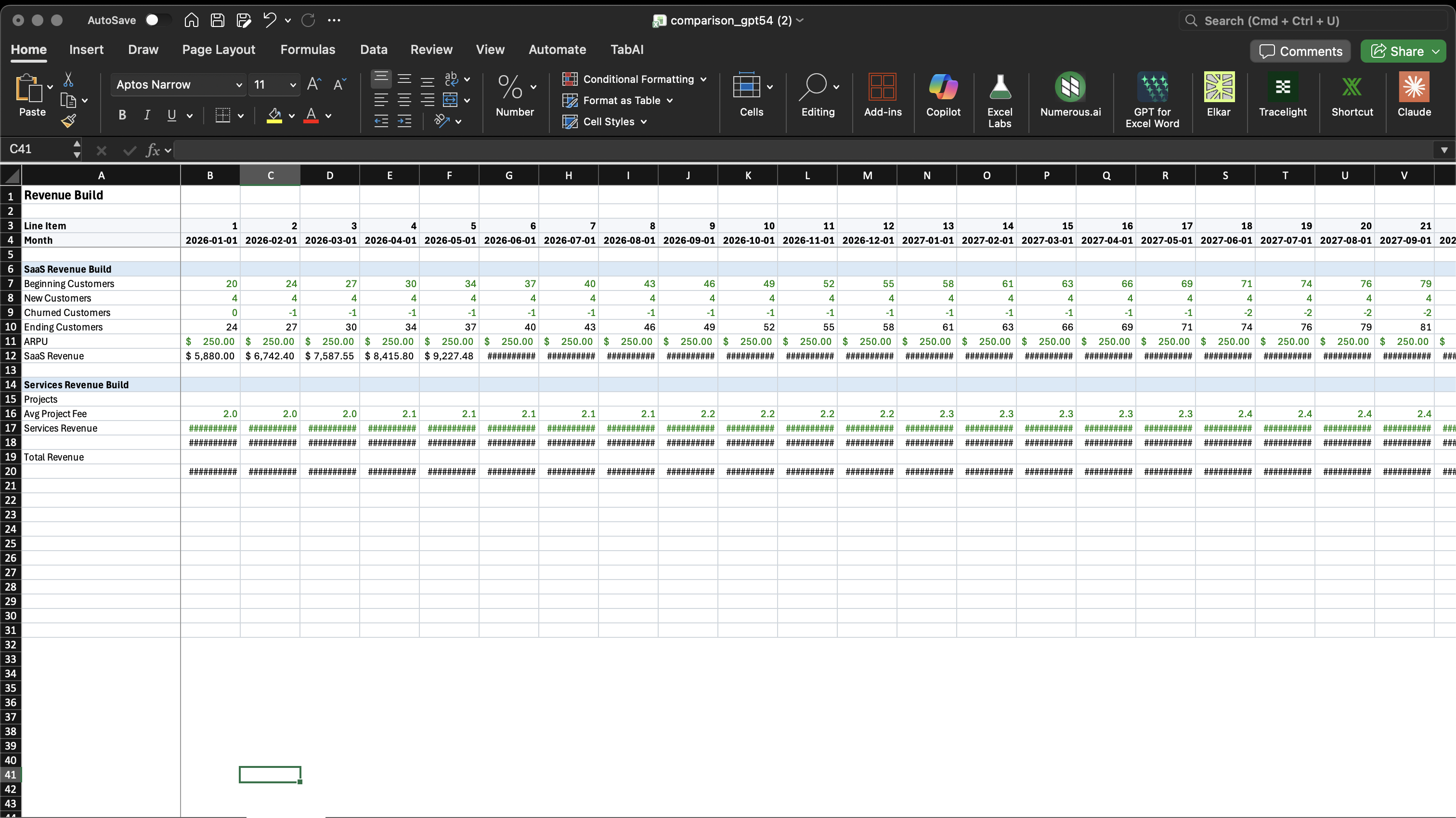

Same prompt, same task — formatting from scratch

3) Two generation jumps, two stories

Same benchmarks, very different generation jumps.

Opus 4.6 → 4.7

A toss-up

4.7 dips slightly on easy tasks (78.7% vs 79.1%) but pulls ahead on hard ones — v25 Medium climbs from 63.0% to 65.3%. Better for long, demanding runs. Opus 4.6 is still the Shortcut default.

GPT-5.4 → 5.5

A clean sweep

Every effort level on v25 picks up 4–7% in accuracy and gets faster — Max drops from 18.4 min to 12.1 min. The leading model on the chart. Still lacks Opus-level formatting taste; feels tight and mechanical where Opus is more considered.

Our recommendation

Frontier models are converging on raw intelligence. What separates them now is look, feel, and taste. Coding went there first. Developers pick between Claude Code and Codex based on which agent they like working with, not which is smarter. GPT-5.5 has a slight edge on the hardest end-to-end tasks, but it's terser and less useful for quick back-and-forth. Opus is the more thoughtful partner.

Give GPT-5.5, Opus 4.7, and Opus 4.6 a diligent try and choose your favorite.

- GPT-5.5 — fire and forget on the hardest work, especially on existing models with clear formatting to maintain and adhere to. 73.9% on v25 in about 12 minutes.

- Opus 4.6 — the Shortcut default for everyday work. Best-in-class formatting, clear step-by-step reasoning, the cleanest day-to-day feel.

- Opus 4.7 — worth getting a feel for. Some people love it, some don't, and there's no obvious intelligence difference over 4.6.

You can switch models on any conversation from the chat input.

Try It Today

Opus 4.7 and GPT-5.5 are live for every Shortcut user. Pick the right model and effort for the task in front of you.